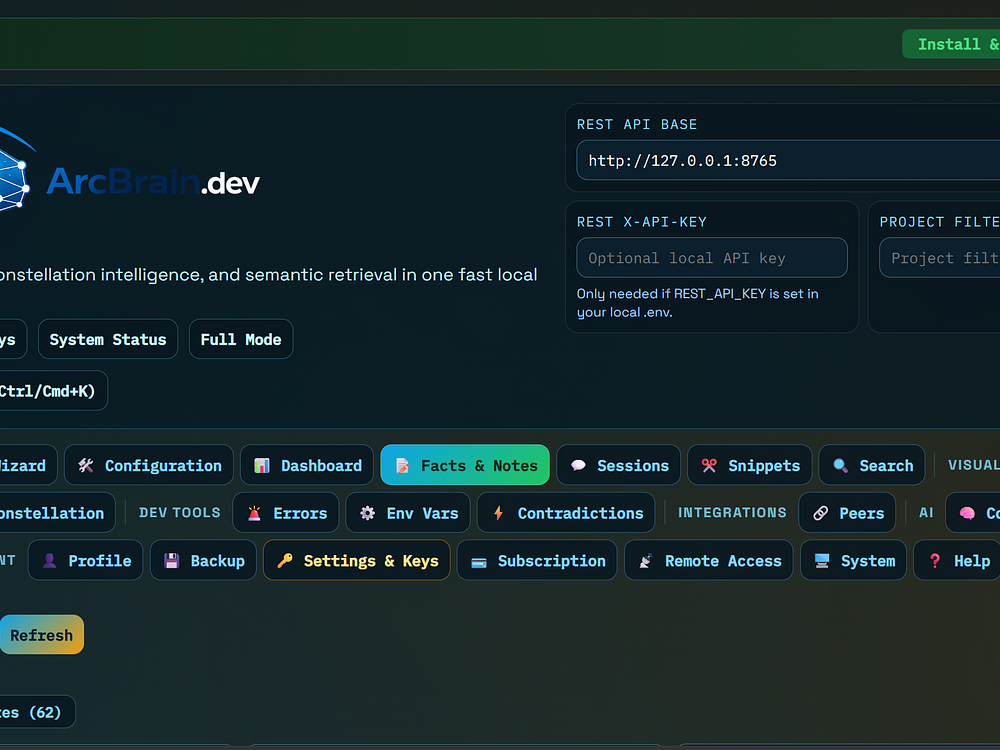

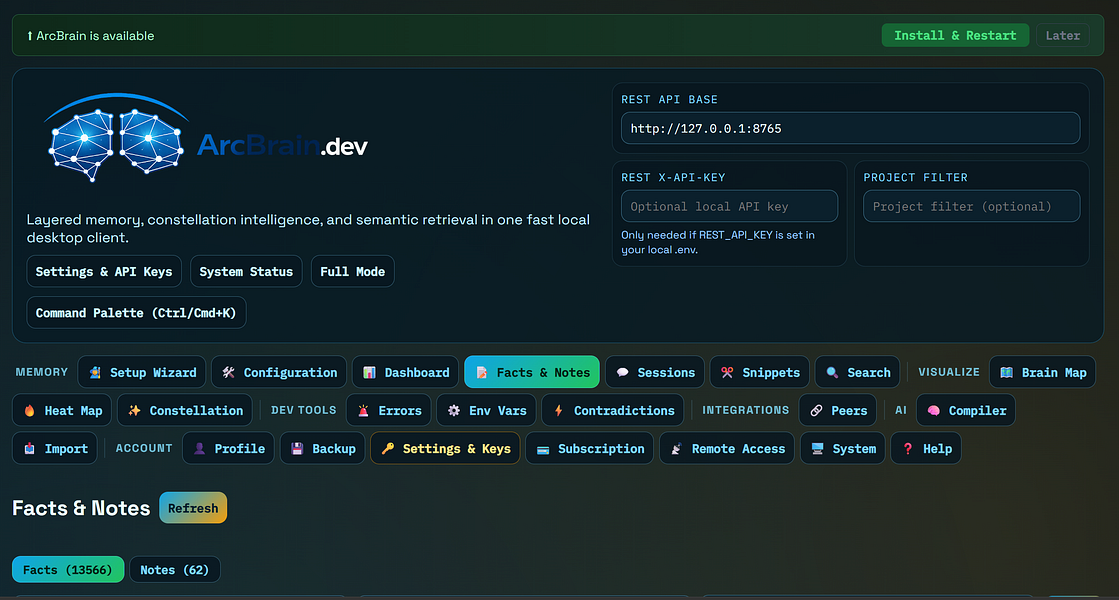

ArcBrain – Add persistent local memory to your AI coding assistant

ArcBrain, listed on BetaList, adds persistent local memory to your AI coding assistant.

The goal is not to chase every launch. The goal is to decide whether this product category can save time, improve output quality, reduce manual work, or replace tools already in the stack.

Quick verdict

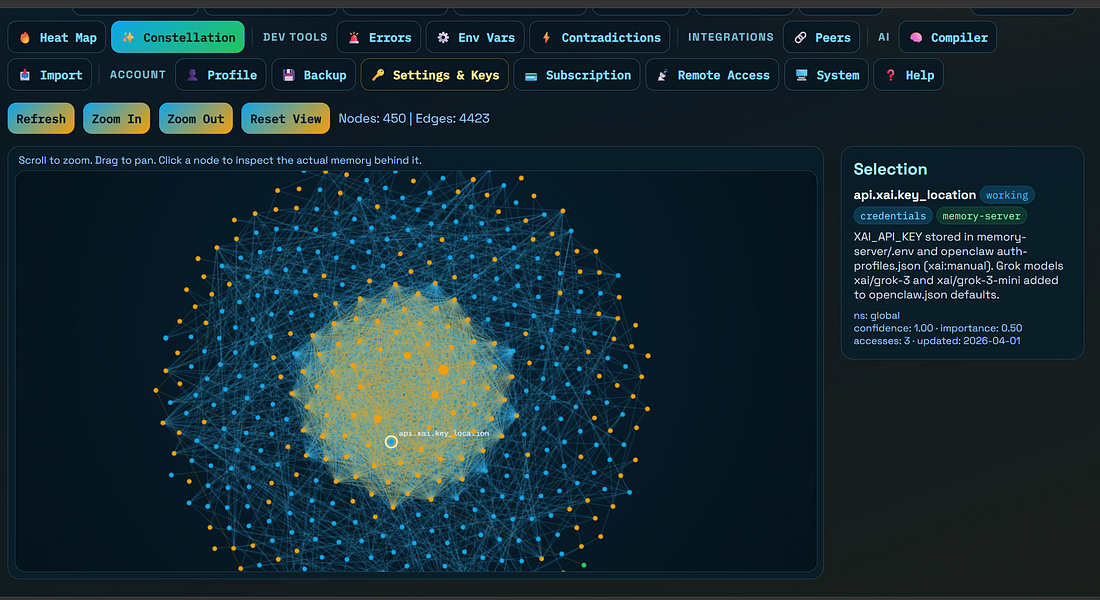

Bottom line: ArcBrain adds persistent local memory to your AI coding assistant. The problem it targets: every time you start a new AI chat, it has no idea what you're building.

What the source highlights

- Key detail 1: ArcBrain adds persistent local memory to your AI coding assistant.

- Comparison signal: Switch between programs like VS Code and Claude desktop, and Claude knows what you were just doing.

- Key detail 3: Every time you start a new AI chat, it has no idea what you're building.
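
To make "persistent local memory" concrete, here is a minimal sketch of the general idea: project notes saved to a local store and prepended to a new chat as context. The source does not describe how ArcBrain works internally, so everything here is an assumption for illustration only. The function names (`open_memory`, `remember`, `recall`, `build_context`) and the table layout are hypothetical.

```python
import sqlite3
import time


def open_memory(path=":memory:"):
    """Open (or create) a local notes store.

    Pass a file path instead of ":memory:" to keep notes across sessions.
    """
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS notes ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "project TEXT NOT NULL, "
        "note TEXT NOT NULL, "
        "created_at REAL NOT NULL)"
    )
    return conn


def remember(conn, project, note):
    """Store one note about a project."""
    conn.execute(
        "INSERT INTO notes (project, note, created_at) VALUES (?, ?, ?)",
        (project, note, time.time()),
    )
    conn.commit()


def recall(conn, project, limit=5):
    """Return the most recent notes for a project, newest first."""
    rows = conn.execute(
        "SELECT note FROM notes WHERE project = ? ORDER BY id DESC LIMIT ?",
        (project, limit),
    ).fetchall()
    return [row[0] for row in rows]


def build_context(conn, project):
    """Format stored notes (oldest first) as a preamble for a new chat."""
    notes = recall(conn, project)
    if not notes:
        return ""
    return "Project context:\n" + "\n".join(f"- {n}" for n in reversed(notes))
```

A new chat session would call `build_context` once and place the result ahead of the first prompt. A real product would likely add retrieval ranking and editor integration, which this sketch does not attempt.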

Best for

This is most relevant for founders, creators, marketers, operators, sales teams, support teams, and small businesses comparing AI tools for real workflow gains.

Good-fit use cases usually include:

- Automation: repetitive work that currently depends on manual copy, research, or handoffs

- Output quality: content, analytics, customer communication, or internal operations that need faster execution

- Tool consolidation: several lightweight tools that could become one clearer workflow

- AI adoption: testing AI features before committing to a broader SaaS migration

Feature evaluation

When reviewing this tool or product category, focus on features that directly affect daily execution rather than impressive demos. The most useful comparison points are:

| Evaluation area | What to check |
|---|---|
| Core workflow | What job the tool completes from start to finish |
| Output quality | Whether results are reliable enough for professional use |
| Integrations | Whether it connects to systems the buyer already uses |
| Controls | Whether teams can manage prompts, permissions, brand rules, data, and approvals |

Comparison with alternatives

Compare this option against established AI tools, horizontal SaaS platforms, and manual workflows. A product is only worth recommending if it creates a clearer outcome than the alternatives readers already know.

Use this comparison checklist:

- Setup: ease of setup versus the learning curve

- AI quality: output quality versus editing effort

- Integrations: native integrations versus Zapier or manual exports

- Pricing: plan limits versus actual usage volume

- Switching cost: migration effort versus consolidation value

Pricing and buying signals

Before choosing a plan, check whether pricing is based on users, seats, credits, automation runs, AI usage, storage, or premium integrations. AI and SaaS pricing can look simple at first but become expensive when usage scales.
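
A quick way to see how a bill changes with usage is to model the plan and try a few volumes. The sketch below assumes a hypothetical plan with a base fee, a per-seat fee, and metered credits with overage. None of these figures come from ArcBrain or any real price list. Amounts are in integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """Hypothetical pricing plan; all amounts are in cents."""
    base_fee: int            # flat monthly fee
    per_seat: int            # monthly fee per user seat
    included_credits: int    # usage credits included each month
    overage_per_credit: int  # price of each credit beyond the allowance


def monthly_cost(plan, seats, credits_used):
    """Return the monthly bill in cents for a given team size and usage."""
    overage = max(0, credits_used - plan.included_credits)
    return plan.base_fee + plan.per_seat * seats + overage * plan.overage_per_credit


# Illustrative numbers only: $20 per seat, 1,000 credits included, $0.05 per extra credit.
example_plan = Plan(base_fee=0, per_seat=2000, included_credits=1000, overage_per_credit=5)

light_usage = monthly_cost(example_plan, seats=3, credits_used=800)    # 6000 cents
heavy_usage = monthly_cost(example_plan, seats=3, credits_used=5000)   # 26000 cents
```

With these assumed numbers, the same three-seat team pays more than four times as much once usage passes the included allowance, which is the effect to check for before committing to a plan.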

Pros and cons

Pros

- Useful for buyers actively comparing AI and SaaS tools

- Can reveal workflow gaps that existing software does not solve well

- Works well as part of a shortlist when paired with pricing and alternatives

Cons

- Launch announcements can move faster than real customer adoption

- Pricing, limits, and integrations may change quickly

- Some products overlap heavily with tools readers already use

Final recommendation

Shortlist this only if it solves a specific workflow better than the current tool stack. The best next step is to test one real use case, compare the result against two alternatives, and calculate whether the time saved or output improved justifies the subscription.
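
The "does it justify the subscription" check can be reduced to simple arithmetic. This is a minimal sketch, assuming time saved is the only benefit counted; the function names and the example figures are hypothetical, not measured results.

```python
def monthly_net_value(hours_saved, hourly_rate, subscription_cost):
    """Value of time saved per month minus the monthly subscription price."""
    return hours_saved * hourly_rate - subscription_cost


def break_even_hours(hourly_rate, subscription_cost):
    """Hours that must be saved each month for the tool to pay for itself."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return subscription_cost / hourly_rate


# Illustrative inputs: 4 hours saved per month, $50 per hour, $20 per month subscription.
net = monthly_net_value(hours_saved=4, hourly_rate=50, subscription_cost=20)
threshold = break_even_hours(hourly_rate=50, subscription_cost=20)
```

Run the same calculation for each alternative on the shortlist, using hours measured from the one real use case you tested rather than vendor estimates.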

FAQ

Who should compare this type of tool?

Founders, operators, marketers, creators, and small teams that regularly evaluate AI and SaaS tools should compare it against both direct competitors and existing internal workflows.

What should I test before paying?

Check the core use case, pricing, integrations, data privacy, setup time, and whether the tool produces a repeatable outcome for your workflow.